In a significant shift from its previous content moderation strategy, which was driven by support for government-influenced narratives, Meta, the parent company of platforms like Facebook, Instagram, and Threads, has announced the termination of its biased third-party fact-checking program in favor of a system reminiscent of X’s Community Notes.

This change, effective immediately in the U.S., mirrors the model popularized by Elon Musk’s platform X.

Let’s take a deeper look into what this upgrade actually is, the rationale behind it, and its potential impacts for the platforms and other social media.

The Shift from Fact-Checkers to “X” Style Community Notes

Meta’s decision to abandon its corrupted fact-checking program comes after years of criticism regarding the revealed political bias of its fact-checkers and government influence.

Joy Reid is ignorant! Zuckerberg said that the ‘fact-checkers’ were “too politically biased & destroyed more trust than they created.” He’s switching to ‘community notes’ like X.

Joy Reid doesn’t want a fair system. She wants biased propaganda aimed against Conservatives. pic.twitter.com/oywfSIUxqS

— LionHearted (@LionHearted76) January 8, 2025

Mark Zuckerberg, Meta’s CEO, has been vocal, especially recently, about the fact that these “third-party” moderators often introduced their own biases into the content moderation process, leading to what some consumers perceived as explicit censorship rather than correction of misinformation.

In response to this loud and growing criticism, Meta is adopting a system where the responsibility for finding and calling out misleading or false information is handed over to the users themselves.

Elon Musk via AutismCapital on X

“We’ve seen this approach work on X where they empower their community to decide when posts are potentially misleading and need more context,” stated Joel Kaplan, Meta’s Chief Global Affairs Officer. The change aims to “democratize” content verification by allowing users from all ideological backgrounds to contribute and agree on notes that provide additional context or corrections to posts.

How Community Notes Works For Content Moderation

In this new system, much like on Elon Musk’s X, vetted users can write notes on posts that they believe require clarification or correction.

These notes are then voted on by the community.

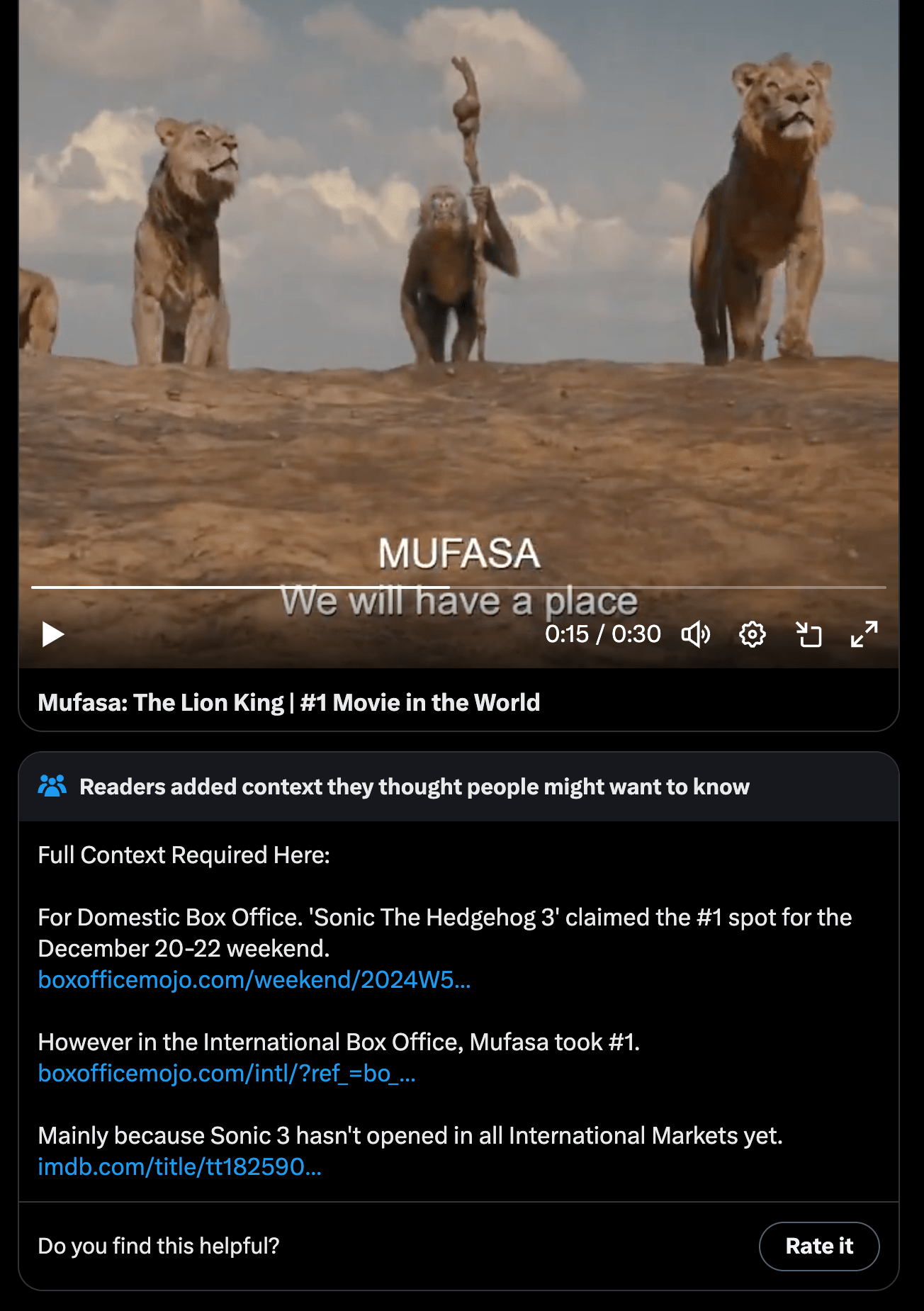

An X Community Note Attached to Disney’s Post Declaring Mufasa the Number One Movie in the World – X, Walt Disney Studios

For a note to be published and visible to all users, it must receive approval from a large group of contributors, ensuring that no single political or ideological group can dominate the narrative. This system is intended to reduce bias and increase transparency in how content is moderated on Meta’s platforms.
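To make the idea concrete, here is a minimal sketch of a cross-group agreement rule. It is only an illustration: neither Meta’s nor X’s actual scoring method is described in this article, and the `Rating` class, the `note_is_published` function, the viewpoint labels, and all thresholds below are hypothetical assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Rating:
    rater_group: str  # hypothetical viewpoint label for the contributor
    helpful: bool     # whether this contributor rated the note helpful


def note_is_published(ratings, min_ratings=5, min_groups=2, threshold=0.6):
    """Toy rule: publish only if enough contributors rated the note and
    every viewpoint group that rated it found it helpful at or above
    the threshold. All parameter values are illustrative assumptions."""
    if len(ratings) < min_ratings:
        return False

    # Collect the helpful/not-helpful votes per viewpoint group.
    votes_by_group = defaultdict(list)
    for rating in ratings:
        votes_by_group[rating.rater_group].append(rating.helpful)

    # A single group cannot publish a note on its own.
    if len(votes_by_group) < min_groups:
        return False

    # Every participating group must clear the helpfulness threshold.
    return all(
        sum(votes) / len(votes) >= threshold
        for votes in votes_by_group.values()
    )


one_sided = [Rating("group_a", True)] * 6
cross_group = [Rating("group_a", True)] * 3 + [Rating("group_b", True)] * 3
split = [Rating("group_a", True)] * 3 + [Rating("group_b", False)] * 3

print(note_is_published(one_sided))
print(note_is_published(cross_group))
print(note_is_published(split))
```

In this sketch, a note backed by only one group stays hidden no matter how many votes it collects, which is the property the paragraph above describes.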

Implications for Free Speech and Misinformation on Meta Platforms

Supporters of this change argue it aligns with Meta’s original purpose of sharing events and enabling free expression.

By removing what were seen as overly compromised, influenced, and restrictive content policies, Meta hopes to foster a broader range of discourse on topics like immigration and identity politics, which were previously under strict government-directed moderation.

On 17 February 2020, Věra Jourová, Vice-President of the European Commission in charge of Values and Transparency, received Mark Zuckerberg, CEO of Facebook. Photo Credit: © European Union, 2024, CC BY 4.0 <https://creativecommons.org/licenses/by/4.0>, via Wikimedia Commons

Politically driven critics, however, worry that this move might lead to an increase in so-called “misinformation,” especially without the oversight of “trained” fact-checkers. They argue that while community moderation can be effective, it might not match the thoroughness and expertise of “professional” fact-checkers.

Political Motives and Corporate Motives Driving this Change

This policy shift comes at a time in which Meta seems to be realigning its strategy with a more conservative-friendly or balanced approach. That’s especially notable given the recent changes in U.S. political leadership. The appointment of Joel Kaplan, a former staff member in the George W. Bush administration, as head of global policy, along with a $1 million donation to Trump’s inauguration fund, suggests an intent to curry favor with the incoming administration, which is known for criticizing social media platforms’ moderation practices.

President Donald Trump being sworn in on January 20, 2017 at the U.S. Capitol building in Washington, D.C. Melania Trump wears a sky-blue cashmere Ralph Lauren ensemble. He holds his left hand on two versions of the Bible, one childhood Bible given to him by his mother, along with Abraham Lincoln’s Bible. Photo Credit: The White House, Public domain, via Wikimedia Commons

Also notable, and a complete tonal shift in political ideology at Meta’s platforms, is the addition of a new board member: Dana White of the UFC.

Meta announced three new board members in total on January 6: the aforementioned White, along with John Elkann (STLA) and Charlie Songhurst (MSFT). These selections for oversight indicate a broader reach across industries, venturing away from Silicon Valley-tied management.

User Response and Platform Dynamics

The response from users in the few hours since this announcement has been mixed.

Some applaud the move toward community-led content moderation, seeing it as a step toward more open dialogue and less egregious censorship. Pro-censorship ideologues, meanwhile, express concern over the potential for increased harassment or the spread of unchecked “misinformation.”

Elon Musk via Real Time with Bill Maher YouTube

Moreover, posts on X have been highlighting both the potential and supposed pitfalls of this system, with some celebrating the change as a victory for free speech.

The Clearer and Freedom-Driven Road Ahead

As Meta rolls out this “new” community notes system, blatantly borrowed from Elon Musk’s X, it will be interesting to see how it affects the quality of discourse on its platforms and to what extent Meta will still kowtow to global elitists.

Mark Zuckerberg F8 2018 Keynote. Photo Credit: Anthony Quintano from Honolulu, HI, United States, CC BY 2.0 <https://creativecommons.org/licenses/by/2.0>, via Wikimedia Commons

The company has promised to monitor and refine the system over time, focusing on illegal content and “high-severity” violations while actually allowing more room for political and social discussions. The success of this model will largely depend on the community’s ability to self-regulate, the clarity of the guidelines set by Meta, and the platform’s willingness to adapt to user feedback with less concern over global regulatory ideologues, especially in regions like the EU where “misinformation” laws are stringent against actual truthful information.

Ultimately, this move by Meta to replace its biased fact-checkers with a balanced Community Notes system is both a bold change of tack in platform governance and a strategic pivot in response to political and user feedback.

Can this Meta community notes reform lead to a more informed social media environment? That remains to be seen. But it certainly marks a new chapter in the ongoing debate over content moderation in the digital age.